This is a quick post demonstrating how to enable the (embedded) Harbor Image Registry in vSphere 7 with Kubernetes. Harbor was originally developed by VMware as an enterprise-grade private container registry. It was donated to the CNCF in 2018 and recently became a CNCF graduated project. For this demo, we'll activate the … Continue reading Enabling embedded Harbor Image Registry in vSphere 7 with Kubernetes

Month: August 2020

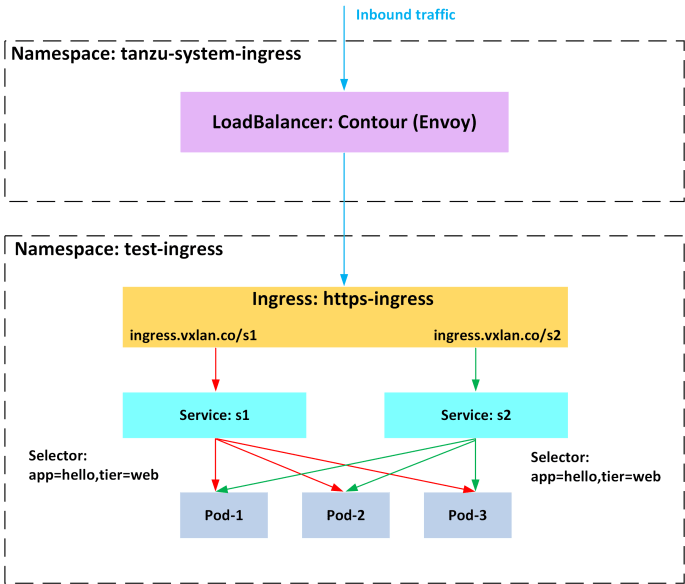

Deploying Contour Ingress Controller on Tanzu Kubernetes Grid (TKG)

This post provides a guide to help you deploy the Contour Ingress Controller onto a Tanzu Kubernetes Grid (TKG) cluster. Contour is an open-source Kubernetes ingress controller that exposes HTTP/HTTPS routes for internal services so they are reachable from outside the cluster. Like many other ingress controllers, Contour can provide advanced L7 URL/URI-based routing … Continue reading Deploying Contour Ingress Controller on Tanzu Kubernetes Grid (TKG)