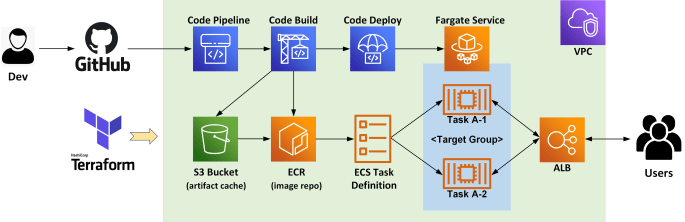

Build a Serverless CI/CD pipeline on AWS with Fargate, CodePipeline and Terraform

This blog provides an example of deploying a CI/CD pipeline on AWS utilising the serverless container platform Fargate and the fully managed CodePipeline service. We'll also use Terraform to automate building the entire AWS environment, as shown in the diagram below. Specifically, we'll be creating the following AWS resources: 1x demo VPC …

Month: June 2020

Cloud Native DevOps on GCP Series Ep3 – Use Terraform to launch a Serverless CI/CD pipeline with Cloud Run, GCR and Cloud Build

This is the third episode of our Cloud Native DevOps on GCP series. In the previous chapters, we achieved the following: we built a GKE cluster with Terraform, and we created a CI/CD pipeline with GKE, GCR and Cloud Build. This time, we will take a step further and go completely serverless by deploying the same Node app onto the …

Cloud Native DevOps on GCP Series Ep2 – Create a CI/CD pipeline with GKE, GCR and Cloud Build

This is the second episode of our Cloud Native DevOps on GCP series. In the previous chapter, we built a multi-AZ GKE cluster with Terraform. This time, we'll create a cloud native CI/CD pipeline leveraging our GKE cluster and Google DevOps tools such as Cloud Build and Google Container Registry (GCR). We'll create a Cloud …

Cloud Native DevOps on GCP Series Ep1 – Build a GKE Cluster with Terraform

This is the first episode of our Cloud Native DevOps on GCP series. Here we'll be building a Google Kubernetes Engine (GKE) cluster using Terraform. From my personal experience, GKE has been one of the most scalable and reliable managed Kubernetes solutions, and it's also 100% upstream compliant and certified by the CNCF. For this demo …

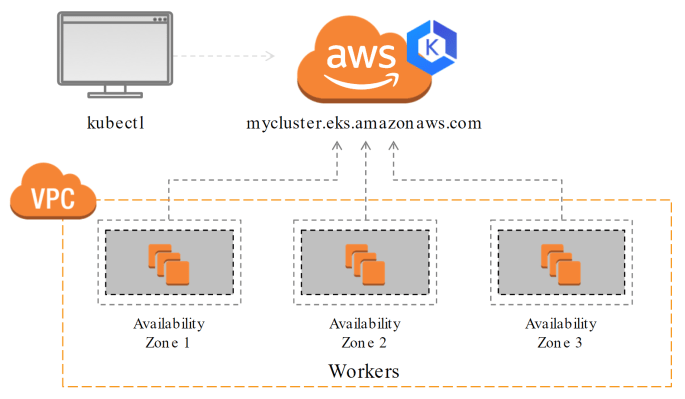

Provision an AWS EKS Cluster with Terraform

In this post we'll provision an AWS Elastic Kubernetes Service (EKS) cluster using Terraform. EKS is an upstream-compliant Kubernetes solution that is fully managed by AWS. I have provided a sample Terraform script here. It will build a multi-AZ EKS cluster that looks like this: Specifically, we'll be launching the following AWS resources: …