This is the 3rd episode of our NKE lab series. Previously, I walked through how to deploy an NKE-enabled Kubernetes cluster in a nested Nutanix CE environment, and how to provide persistent storage to your NKE clusters using two Nutanix CSI options. In this episode, we'll dive deep into NKE networking by exploring … Continue reading NKE lab series – Ep3: Deep dive into NKE networking with Calico CNI

Category: Kubernetes

NKE lab series – Ep2: Deploy a multi-tier web application on a NKE cluster using persistent storage with Nutanix CSI

This is the 2nd episode of our NKE lab series. In the 1st episode, I demonstrated how you can easily deploy an enterprise-grade NKE cluster in a Nutanix CE lab environment with nested virtualization. In this episode, we'll deploy a containerized multi-tier web application onto our NKE cluster by leveraging the built-in Nutanix CSI driver … Continue reading NKE lab series – Ep2: Deploy a multi-tier web application on a NKE cluster using persistent storage with Nutanix CSI

Nutanix Kubernetes Engine (NKE) lab series – Ep1: Create a NKE-enabled Kubernetes Cluster on Nutanix Community Edition (CE)

This blog is the 1st episode of a Nutanix Kubernetes Engine (NKE) home lab series. In this post, I will walk through the detailed process of deploying an enterprise-ready NKE-enabled Kubernetes cluster within a Nutanix CE environment. Nutanix CE is a free version of Nutanix AOS, which powers the Nutanix Enterprise Cloud Platform. It is … Continue reading Nutanix Kubernetes Engine (NKE) lab series – Ep1: Create a NKE-enabled Kubernetes Cluster on Nutanix Community Edition (CE)

Enabling embedded Harbor Image Registry in vSphere 7 with Kubernetes

This will be a quick blog to demonstrate how to enable the (embedded) Harbor Image Registry in vSphere 7 with Kubernetes. Harbor was originally developed by VMware as an enterprise-grade private container registry. It was then donated to the CNCF in 2018 and recently became a CNCF graduated project. For this demo, we'll activate the … Continue reading Enabling embedded Harbor Image Registry in vSphere 7 with Kubernetes

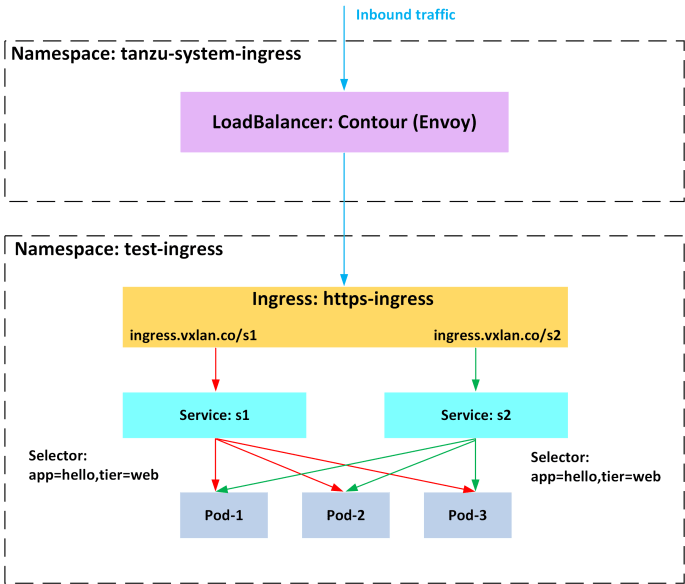

Deploying Contour Ingress Controller on Tanzu Kubernetes Grid (TKG)

This blog provides a guide to help you deploy the Contour Ingress Controller onto a Tanzu Kubernetes Grid (TKG) cluster. Contour is an open source Kubernetes ingress controller that exposes HTTP/HTTPS routes for internal services so they are reachable from outside the cluster. Like many other ingress controllers, Contour can provide advanced L7 URL/URI-based routing … Continue reading Deploying Contour Ingress Controller on Tanzu Kubernetes Grid (TKG)