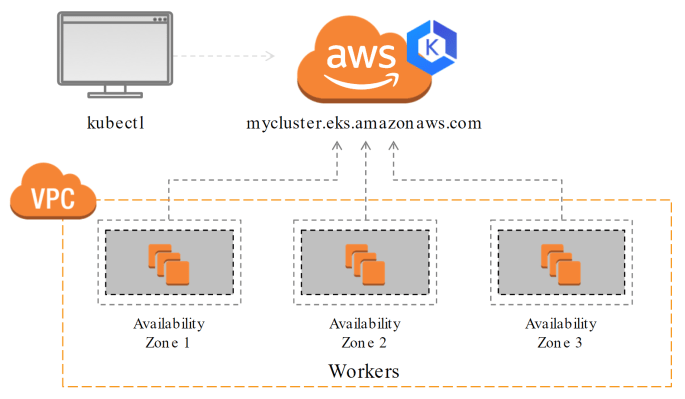

In this post we'll provision an AWS Elastic Kubernetes Service (EKS) cluster using Terraform. EKS is an upstream-compliant Kubernetes service that is fully managed by AWS.

I have provided a sample Terraform script here. It builds a multi-AZ EKS cluster that looks like this:

Specifically, we'll be launching the following AWS resources: …